The blog is authored by the Building Evidence in Education (BE2) Secretariat, represented by Maria Brindlmayer, in collaboration with the BE2 Steering Committee, composed of FCDO, Jacobs Foundation, UNICEF Innocenti – Global Office of Research and Foresight, USAID, and the World Bank. BE2 is a working group of over 40 bilateral education donors, multilateral education agencies and foundations active in education research. This blog is based in part on a guidance note developed for and by the BE2 working group.

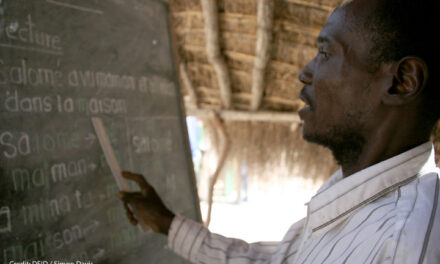

How education programmes are implemented can determine whether an intervention is effective or ineffective. Programmes are often piloted at a small scale under favourable conditions – for example, with sufficient budget to allocate resources per student and easy oversight over processes and staffing. Conditions are often quite different when such a programme is scaled up. Implementation research can provide valuable information for scaling: it has long been used in health and other sectors to adapt and fine-tune interventions after the completion of efficacy trials, but before taking them to scale.

Building Evidence in Education (BE2) defines implementation research as “the scientific inquiry into questions concerning implementation—the act of carrying an intention into effect, which can be policies, programs, or individual practices (collectively called interventions).” It is an “examination of what works, for whom, under what contextual circumstances, and whether interventions are scalable in equitable ways.”

We have too few examples of successful education interventions being implemented at scale and resulting in improved learning outcomes. This has been the basis for thinking across the BE2 network on the role of implementation science in education, and for the development of guidance as a first step towards understanding how education research donors, along with researchers, practitioners and governments, may approach implementation research. “Static” projects are locked for years into a theory of change and indicators developed at the proposal or inception stage, only for the final evaluation to show that the project was not effective. Such projects are frustrating for governments, implementers, researchers and funders. This does not mean that implementation research should replace impact evaluations or similar research. Rather, it is an excellent complement to impact evaluations, especially in helping to explain why effects might be larger in some contexts or among some subgroups.

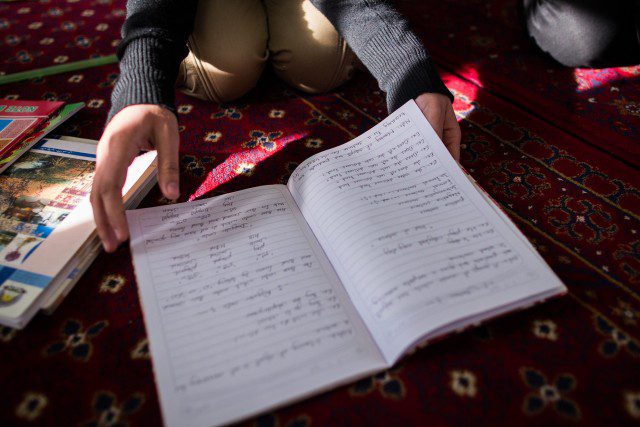

It is not always straightforward to ensure effective implementation in education, where desired outcomes depend heavily on individual teaching and learning behaviours. Furthermore, where resources are scarce, it is critical that information about the effectiveness of an intervention’s implementation is available in time to take corrective action if needed. Implementation research can be an effective tool in this process.

A new guidance note on using implementation research in education recommends that implementation research be used as a proactive learning process, complementary to routine monitoring and evaluation activities, that enables implementers and funders to understand how and why an intervention works. To do this well, partners should intentionally embed research into implementation from the initial planning stages of an activity. The focus of implementation research on why and how an intervention works should also be reflected in the intervention’s theory of change.

The guidance sets out three key factors to consider in comprehensive implementation research:

Examination of stakeholders’ perspectives (including beneficiaries and implementers): how their values, experiences, capacities, and constraints interact with the objectives and process of implementation. Relevant research questions include:

- How can we ensure equitable outcomes for intended beneficiaries, including those in vulnerable circumstances?

- To what extent has behaviour change occurred as anticipated? What incentives are necessary to achieve the desired behaviour change?

Examination of context: how its features may impede or facilitate implementation. Context includes geographic, ecological, and environmental constraints, as well as political and economic systems that structure the opportunities individuals and groups in a society have. Relevant research questions include:

- How can the intervention be adapted to achieve results in diverse, complex local contexts?

- How do shifting policy priorities impact the implementation of the intervention?

- How might we address a lack of public support?

Examination of the intervention: how its component parts interact with the stakeholders and the context in the process of implementation. It also provides an opportunity to validate the theory of change on which the intervention is based. Relevant research questions include:

- What was the degree of fidelity in implementation? (To what extent were relevant stakeholders consulted/included in the design of the intervention? What strategies have been used for roll-out and what have the results been? To what extent have expected resources been available? To what extent have they been utilised as expected? To what extent have they been sufficient?)

As stakeholders determine the research purpose and develop research questions, they may find that multiple rounds of research are sometimes required to address those questions.

Implementation research is most useful when an intervention’s implementers can make use of the findings to improve the implementation. Making use of the findings requires ongoing learning at an organisational or system level and a willingness to adapt.

It is therefore useful to consider the conditions that facilitate the uptake of implementation research.

- Effective implementation at scale requires long-term engagement working through existing structures, which may or may not have participated in pilot phases of the intervention to test efficacy. Ownership of an intervention, and accountability for its success, can be built through co-design of the implementation or scale-up process, integrating flexibility into the management of the intervention.

- One of the key lessons learned from a wide range of studies of leadership in education is that effective uptake of research requires both strong leadership and an incentive structure that rewards learning.

- Interventions should include provisions for learning activities, including analysis of feedback from stakeholders, sharing of lessons learned with partners, and planning for adaptation based on performance and feedback in prior periods. Learning activities also provide an opportunity to engage stakeholders in reflection and sense-making exercises, or the interpretation of data collected. This is particularly relevant for implementation research in which it is critical to ensure stakeholders remain engaged throughout the process.

Summary:

In short, implementation research is a useful tool to help ensure that an intervention achieves its intended results in specific contexts and at scale. When integrated into programme implementation, it can provide early information on barriers to, or facilitators of, effective intervention delivery and allow for adaptation to maximise impact. When incorporated directly into an implementation project using a participatory approach, it can also eliminate the barriers that often exist between those who do the research and those who implement the project. It can also shorten the time lag between finding out that something has not worked and making adaptations. We owe it to our students to use available tools that enable us to deliver the best programmes possible and ensure effective use of education funding.

———————–

BE2 welcomes feedback on the guidance note and examples of applying implementation research. This is a live document and will be updated as new information and examples become available.

Read the complete implementation research guidance note.

Provide feedback: Secretariat@building-evidence-in-education.org